
Jaeik Kim

I'm an M.S. student at AIDAS Lab, Seoul National University, advised by Jaeyoung Do.

Research Keywords: Diffusion Language Models / Omnimodal Models / Robotics / Model Editing & Personalization

Email / Google Scholar / GitHub / LinkedIn


News


Publications


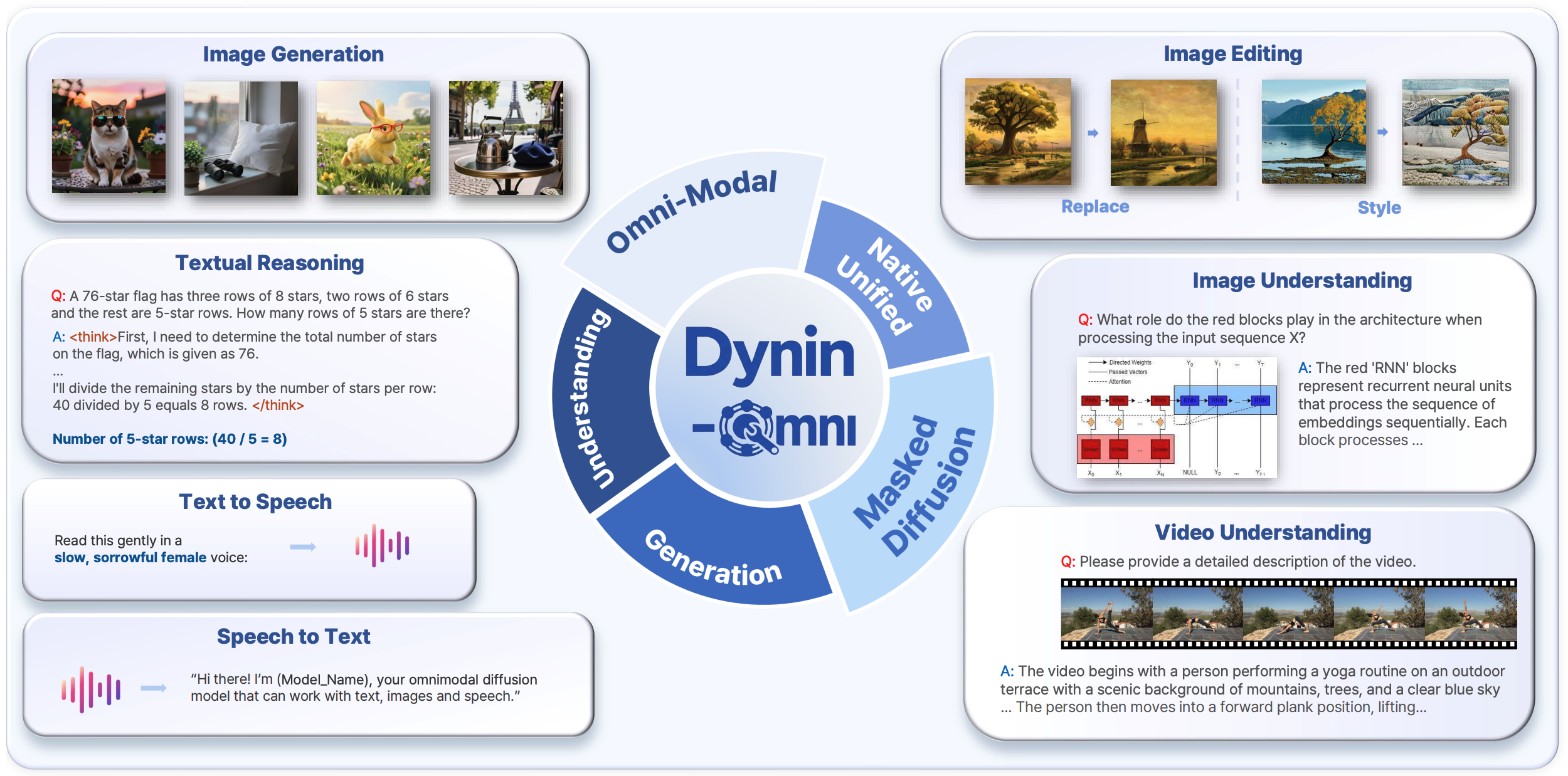

Dynin-Omni: Omnimodal Unified Large Diffusion Language Model

Jaeik Kim, Woojin Kim, Jihwan Hong, Yejoon Lee, Sieun Hyeon, Mintaek Lim, Yunseok Han, Dogeun Kim, Hoeun Lee, Hyunggeun Kim, Jaeyoung Do

arXiv preprint, 2026

project page / paper / code / model / demo


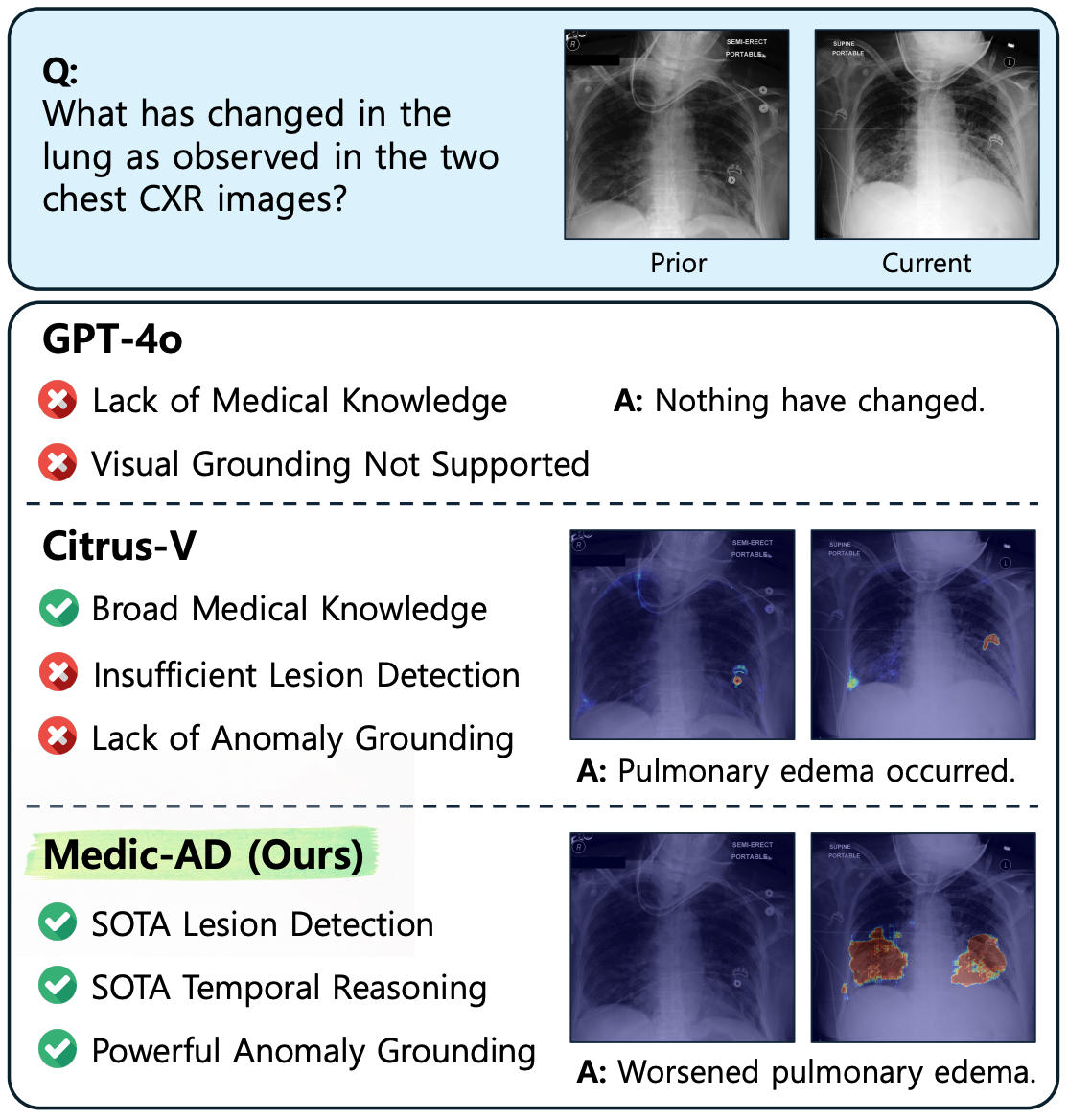

Medic-AD: Towards Medical Vision-Language Model's Clinical Intelligence

Woohyeon Park, Jaeik Kim, Sunghwan Steve Cho, Pa Hong, Woo Kyoung Jeong, Yoojin Nam, Nam-Joon Kim, Ginny Wong, Ka Chun Cheung, Jaeyoung Do

CVPR, 2026 (Oral presentation)

paper


MMPB: It's Time for Multi-Modal Personalization

Jaeik Kim, Woojin Kim, Woohyeon Park, Jaeyoung Do

NeurIPS, 2025

project page / paper / dataset


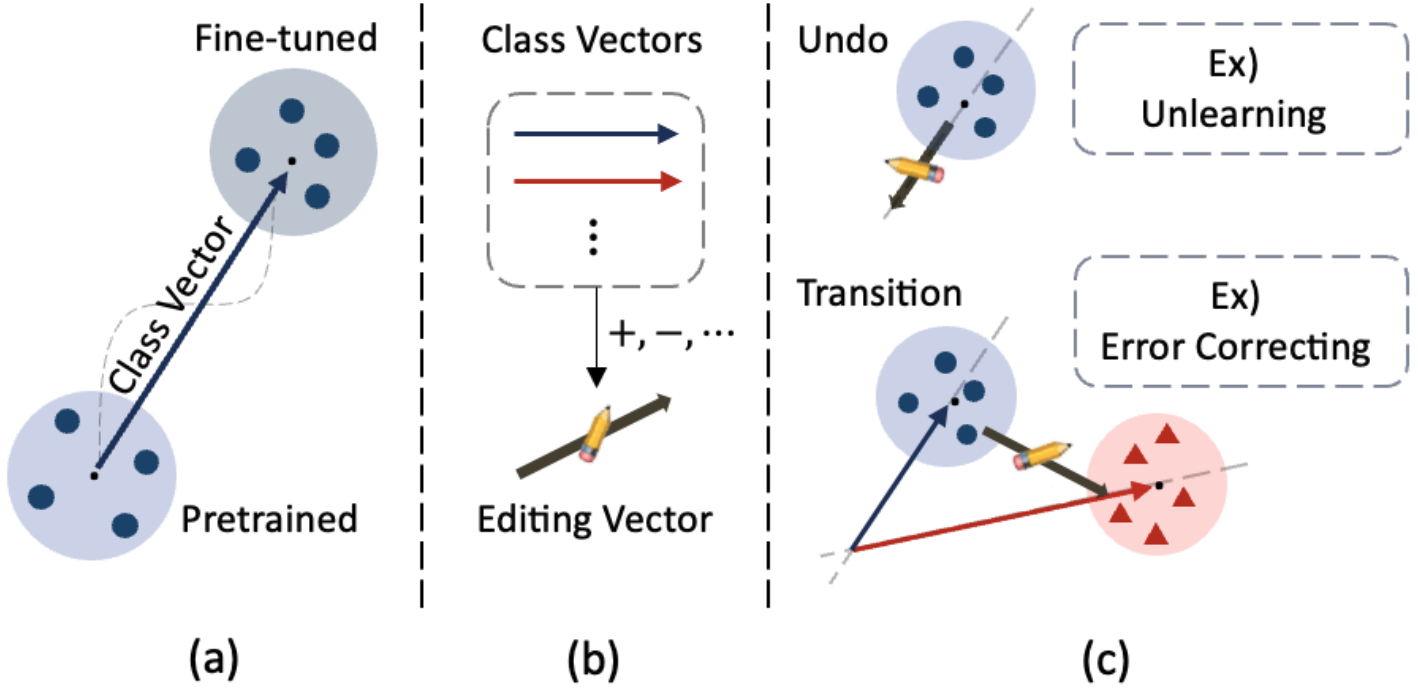

Exploring and Leveraging Class Vectors for Classifier Editing

Jaeik Kim, Jaeyoung Do

NeurIPS, 2025

project page / paper


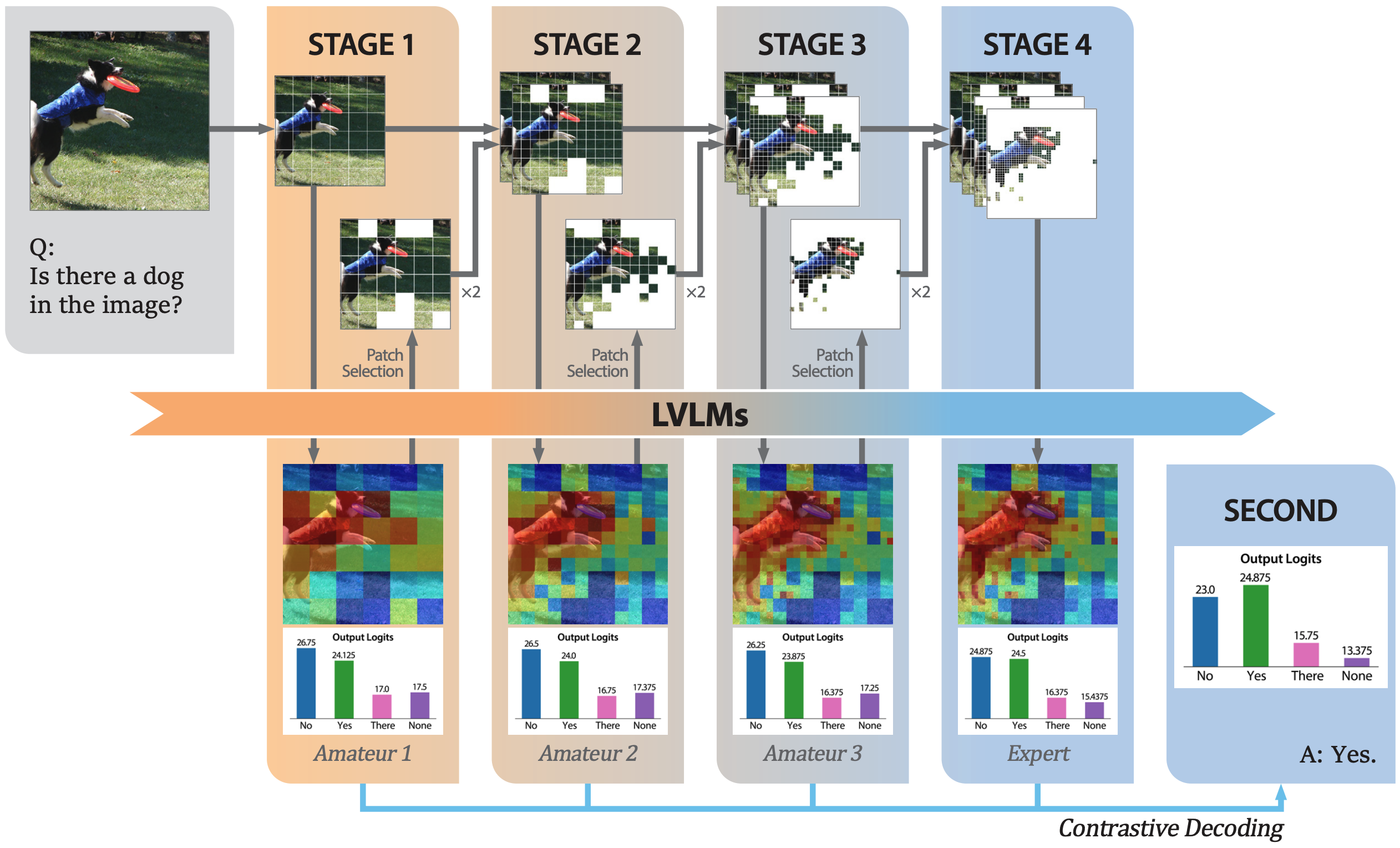

SECOND: Mitigating Perceptual Hallucination in Vision-Language Models via Selective and Contrastive Decoding

Woohyeon Park, Woojin Kim, Jaeik Kim, Jaeyoung Do

ICML, 2025

project page / paper